Introduction

Tavern Brawl is one of the most popular and unique Hero Realms community events. It is a team-based competition in which each team has 5 members playing different classes and ancestries. If you’re unfamiliar with the format, the Tavern Brawl article explains it quite well. One of the most interesting parts of the competition is the matchmaking phase, where teams determine the 5 match-ups for the week. Ideally this phase would have the following properties:

- Explainability: The process is easy to explain, understand and follow.

- Fairness: On average, we should minimize the advantage of going first (or second).

- Speed: We should minimize the number of back-and-forth steps in the matchmaking process.

- Agency: The match-ups should be the result of the teams’ choices (rather than random).

- Fun: We should design a system where players aren’t getting hard-countered every week.

- Variety: A wide variety of team comps are competitively viable.

Investigating the Current Method

The first step was to determine how well the current method satisfies these goals. I started by writing a program to simulate a round-robin tournament among 9 team compositions that my team, the Storm Bloopers, was considering this season. Those teams were:

| Team Name | Class 1 | Class 2 | Class 3 | Class 4 | Class 5 |
| --- | --- | --- | --- | --- | --- |
| Team 1 | Wizard | Alchemist | Fighter | Cleric | Necromancer |
| Team 2 | Wizard | Alchemist | Fighter | Cleric | Ranger |
| Team 3 | Wizard | Alchemist | Fighter | Cleric | Druid |
| Team 4 | Wizard | Alchemist | Cleric | Thief | Monk |
| Team 5 | Wizard | Alchemist | Fighter | Barbarian | Ranger |
| Team 6 | Wizard | Fighter | Barbarian | Necromancer | Ranger |
| Team 7 | Wizard | Alchemist | Fighter | Cleric | Monk |
| Team 8 | Wizard | Alchemist | Fighter | Ranger | Monk |
| Team 9 | Wizard | Alchemist | Fighter | Cleric | Barbarian |

This tournament gave every team the opportunity to go first and second in matchmaking against every other team, and used the min-max algorithm to determine the optimal matchmaking outcome for each week. The win rates were pulled from Hero Helper data, and ancestries were ignored. Teams were awarded the expected value of points from the match-ups (the average number of points you would score if you played the matches a thousand times). For example, the first match for Team 1 was against Team 2 and resulted in:

Team 1 (P1) vs. Team 2 (P2) | Score: 1.680 for Team 1

Optimal Pairings:

- Alchemist vs. Fighter
- Necromancer vs. Alchemist
- Cleric vs. Wizard
- Wizard vs. Cleric
- Fighter vs. Ranger

The results of the tournament were:

--- Turn Advantage Analysis ---

- Total points scored by the first-turn team: 111.397
- Total points scored by the second-turn team: 104.603

--- Final Standings ---

- Team 7: 25.300 points
- Team 5: 24.701 points
- Team 1: 24.450 points
- Team 9: 24.414 points
- Team 8: 24.367 points
- Team 4: 23.887 points
- Team 6: 23.608 points
- Team 3: 23.531 points
- Team 2: 21.743 points
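The tournament structure described above, where every team goes both first and second against every other team, could be sketched roughly as follows. This is not the actual simulation program: `run_double_round_robin` and the `matchup_value` callback are hypothetical names, and the even 1.5/1.5 split in the toy usage is only a placeholder for a real min-max evaluation.

```python
from itertools import permutations


def run_double_round_robin(teams, matchup_value):
    """Play every ordered pairing once, so each team goes first and second
    against every other team.

    matchup_value(first, second) is a hypothetical callback that returns
    (points_for_first, points_for_second) for one week's matchmaking.
    """
    standings = {name: 0.0 for name in teams}
    first_total = 0.0
    second_total = 0.0
    for first, second in permutations(teams, 2):
        p_first, p_second = matchup_value(teams[first], teams[second])
        standings[first] += p_first
        standings[second] += p_second
        first_total += p_first
        second_total += p_second
    return standings, first_total, second_total


# Toy usage: three of the teams and a placeholder evaluator.
teams = {
    "Team 1": ["Wizard", "Alchemist", "Fighter", "Cleric", "Necromancer"],
    "Team 2": ["Wizard", "Alchemist", "Fighter", "Cleric", "Ranger"],
    "Team 3": ["Wizard", "Alchemist", "Fighter", "Cleric", "Druid"],
}
standings, first_total, second_total = run_double_round_robin(
    teams, lambda first, second: (1.5, 1.5)
)
print(standings, first_total, second_total)
```

Swapping the placeholder lambda for a function that runs the matchmaking search would produce standings and turn totals in the same shape as the output above.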

The difference between the total points for first- and second-position teams gives us a good measure of how fair this method of matchmaking is, and the similarity between team scores gives us some measure of variety.

Back and forth steps: 5

Turn order unfairness: 3.1%

Team score standard deviation: 0.95

This is actually pretty good. We know from experience that matchmaking with 5 steps typically takes no more than 2 days, leaving plenty of time to complete the matches. A turn order unfairness of about 3% means teams can generally do well in either first or second position if they make the right choices during matchmaking.
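For readers who want to check the two summary numbers, here is a small sketch that recomputes them from the tournament output. The formulas are assumptions, since the article does not define them: unfairness is taken as the first-position lead divided by all points scored, and the spread as the population standard deviation of the final scores. Both choices match the reported 3.1% and 0.95.

```python
from statistics import pstdev

# Figures copied from the tournament output above.
first_total = 111.397
second_total = 104.603
final_scores = [25.300, 24.701, 24.450, 24.414, 24.367,
                23.887, 23.608, 23.531, 21.743]


def turn_order_unfairness(first_points, second_points):
    """Signed lead of the first-position team as a percentage of all points.

    Assumed definition: positive favours going first, negative going second.
    """
    total = first_points + second_points
    return 100.0 * (first_points - second_points) / total


unfairness = turn_order_unfairness(first_total, second_total)
spread = pstdev(final_scores)  # population standard deviation (assumption)
print(f"Turn order unfairness: {unfairness:.1f}%")
print(f"Team score standard deviation: {spread:.2f}")
```

The sample standard deviation (`statistics.stdev`) would give roughly 1.01 instead, which is why the population version is assumed here.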

Trying Other Tweaks

Now that I had a program to simulate the weekly matchmaking process, I decided to try out some alternative matchmaking methods. Here are some things that I tested out:

- Give Team 2 a ban: Before challengers are declared, the second-position team can pick one match-up that Team 1 cannot make, for example “no sending your wizard against my cleric”. This helped Team 2 way too much and made everything worse: Back and forth steps: 6. Turn order unfairness: -7.5%. Team score standard deviation: 0.99.

- Give Team 2 and Team 1 a ban: Same as before, but Team 1 also gets to declare a ban when they send their first challenger. This adds a significant amount of complexity and still doesn’t end up helping with anything: Back and forth steps: 6. Turn order unfairness: 3.2%. Team score standard deviation: 0.93.

- Send 2 challengers first: Have Team 1 send out 2 challengers in the first step. Team 2 then selects their counters and Team 1 determines the remaining 3 matches. Surprisingly, this was fairly similar to the current method: Back and forth steps: 3. Turn order unfairness: 3.3%. Team score standard deviation: 0.96.

- Send 3 challengers first: Have Team 1 send out 3 challengers in the first step. Team 2 then selects their counters and Team 1 determines the remaining 2 matches. This was terrible for Team 1 but actually reduced the deviation between team compositions: Back and forth steps: 3. Turn order unfairness: -11%. Team score standard deviation: 0.84.

- Send 3 challengers and 2 bans first: Give Team 1 two bans and have them send 3 challengers. Team 2 then selects their counters and Team 1 determines the remaining 2 matches. This seems to give Team 1 the compensation they need to even out the differential from the previous variant: Back and forth steps: 3. Turn order unfairness: 0.1%. Team score standard deviation: 0.86.

Deep Dive Into the 2 Bans 3 Challengers Approach

Detailed Example

First let’s start with an example match-up:

| Team Name | Class 1 | Class 2 | Class 3 | Class 4 | Class 5 |
| --- | --- | --- | --- | --- | --- |
| Team 1 | Wizard | Alchemist | Fighter | Cleric | Necromancer |
| Team 2 | Wizard | Alchemist | Fighter | Cleric | Thief |

Team 1 begins by nominating 3 challengers and declaring 2 bans:

We are sending out our Wizard, Fighter and Necromancer. You may not send your Fighter against our Necromancer. You may not send your Cleric against our Fighter.

This results in the initial matchmaking state:

| Slot | Team 1 | Team 2 |
| --- | --- | --- |
| Ban 1 | Necromancer | Fighter |
| Ban 2 | Fighter | Cleric |
| Match 1 | Wizard | ??? |
| Match 2 | Fighter | ??? |
| Match 3 | Necromancer | ??? |
| Match 4 | ??? | ??? |
| Match 5 | ??? | ??? |

Team 2 responds:

We send our Alchemist to fight the Wizard, our Thief to challenge the Fighter and our Wizard to battle the Necromancer.

At this point the matches look like this:

| Slot | Team 1 | Team 2 |
| --- | --- | --- |
| Ban 1 | Necromancer | Fighter |
| Ban 2 | Fighter | Cleric |
| Match 1 | Wizard | Alchemist |
| Match 2 | Fighter | Thief |
| Match 3 | Necromancer | Wizard |
| Match 4 | ??? | ??? |
| Match 5 | ??? | ??? |

Finally, Team 1 sets the remaining 2 matches, sending their Alchemist against the opposing Cleric and their Cleric against the opposing Fighter:

| Slot | Team 1 | Team 2 |
| --- | --- | --- |
| Ban 1 | Necromancer | Fighter |
| Ban 2 | Fighter | Cleric |
| Match 1 | Wizard | Alchemist |
| Match 2 | Fighter | Thief |
| Match 3 | Necromancer | Wizard |
| Match 4 | Alchemist | Cleric |
| Match 5 | Cleric | Fighter |
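The three steps in this example map naturally onto a small min-max search. The sketch below is one possible way to evaluate the protocol, not the program used for the article: `first_team_value` is a hypothetical name, `win` is an assumed lookup of Team 1's win probability for each class pairing, bans are assumed to apply only to Team 1's challengers, and the score is the expected number of wins rather than tournament points.

```python
from itertools import combinations, permutations


def first_team_value(team1, team2, win):
    """Min-max value for Team 1 under the 3-challengers / 2-bans protocol.

    win[(a, b)] is the assumed probability that Team 1's class a beats
    Team 2's class b. The value is Team 1's expected number of wins.
    """
    best_value, best_opening = float("-inf"), None
    for challengers in combinations(team1, 3):
        rest1 = [c for c in team1 if c not in challengers]
        # A ban forbids one (challenger, opposing class) pairing.
        options = [(c, o) for c in challengers for o in team2]
        for bans in combinations(options, 2):
            banned = set(bans)
            worst = float("inf")
            # Team 2 answers with the legal counters that hurt Team 1 most.
            for counters in permutations(team2, 3):
                pairs = list(zip(challengers, counters))
                if any(pair in banned for pair in pairs):
                    continue
                rest2 = [o for o in team2 if o not in counters]
                fixed = sum(win[pair] for pair in pairs)
                # Team 1 then picks the better of the two remaining pairings.
                tail = max(
                    win[(rest1[0], rest2[0])] + win[(rest1[1], rest2[1])],
                    win[(rest1[0], rest2[1])] + win[(rest1[1], rest2[0])],
                )
                worst = min(worst, fixed + tail)
            if worst > best_value:
                best_value, best_opening = worst, (challengers, bans)
    return best_value, best_opening


team1 = ["Wizard", "Alchemist", "Fighter", "Cleric", "Necromancer"]
team2 = ["Wizard", "Alchemist", "Fighter", "Cleric", "Thief"]
# Placeholder matrix: every game is a coin flip, so the value is 2.5.
even = {(a, b): 0.5 for a in team1 for b in team2}
value, opening = first_team_value(team1, team2, even)
print(value, opening)
```

The search space is small (10 challenger sets, 105 ban pairs and 60 counter assignments), so brute force is enough here. With real win rates in place of the coin-flip matrix, `opening` holds the challengers and bans that maximize Team 1's guaranteed result.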

Analysis

Intuitively, it makes sense that this method should be reasonably fair: each team effectively determines about 2.5 of the 5 matches. It also lets teams avoid their worst counters, which should generally make the matches more fun and interesting on average. In the simulation, it also results in more teams being competitively viable (the team score standard deviation drops from 0.95 to 0.86), with player skill playing a more important role in the outcome of the tournament.

Here are the results of the same 9-team round-robin tournament played with this new method:

--- Turn Advantage Analysis ---

- Total points scored by the First-Position Player: 108.133
- Total points scored by the Second-Position Player: 107.867

--- Final Standings ---

- Team 7: 24.950 points
- Team 4: 24.925 points
- Team 9: 24.406 points
- Team 5: 24.298 points
- Team 1: 24.090 points
- Team 3: 23.936 points
- Team 8: 23.898 points
- Team 6: 23.638 points
- Team 2: 21.859 points

What Does This Mean for Individual Classes?

This doesn’t seem to make a huge difference for any individual class. Here is a comparison of the win rate in the simulated tournament for each represented class using the current and proposed matchmaking methods:

| Class | Win Rate (Current) | Win Rate (Proposed) |
| --- | --- | --- |
| Alchemist | 0.544 | 0.562 |
| Barbarian | 0.555 | 0.536 |
| Cleric | 0.456 | 0.438 |
| Druid | 0.506 | 0.544 |
| Fighter | 0.482 | 0.492 |
| Monk | 0.534 | 0.521 |
| Necromancer | 0.538 | 0.532 |
| Ranger | 0.452 | 0.450 |
| Thief | 0.444 | 0.457 |
| Wizard | 0.495 | 0.489 |
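A per-class win rate like the ones in this table can be derived by averaging each class's expected result over every game it was paired into. The sketch below shows one plausible way to do that. The `class_win_rates` helper and the three sample games are hypothetical, and the article does not say exactly how its figures were aggregated.

```python
from collections import defaultdict


def class_win_rates(games):
    """Average expected win rate per class.

    games is an iterable of (class_a, class_b, p_a_wins) tuples, one per
    simulated game, where p_a_wins is the chance that class_a wins.
    """
    expected_wins = defaultdict(float)
    played = defaultdict(int)
    for class_a, class_b, p_a_wins in games:
        expected_wins[class_a] += p_a_wins
        expected_wins[class_b] += 1.0 - p_a_wins
        played[class_a] += 1
        played[class_b] += 1
    return {name: expected_wins[name] / played[name] for name in played}


# Made-up games purely for illustration (not Hero Helper data).
sample_games = [
    ("Wizard", "Cleric", 0.6),
    ("Wizard", "Wizard", 0.5),
    ("Cleric", "Fighter", 0.3),
]
rates = class_win_rates(sample_games)
print(rates)
```

Note that a mirror match counts as two appearances at 50% for that class, which pulls classes that appear on nearly every team (such as Wizard) toward 0.5.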

What If the Meta is Less Balanced?

The values from Hero Helper data ignore ancestries, which seem to amplify differences between the classes and push more matches into the realm of 70-30. To test the effects of a less balanced meta, we can try doubling each class’s advantage:

win_rate = min(1, max(0, 0.5 + (win_rate - 0.5) * 2))

Even with additional skew we see that this method maintains a turn order unfairness of just 0.5%.
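To make the skew transformation concrete, here is the same formula wrapped in a small function with a few sample inputs. The name `amplify` and the `factor` parameter are introduced only for illustration.

```python
def amplify(win_rate, factor=2.0):
    """Stretch a win rate away from 0.5 by `factor`, clamped to [0, 1]."""
    return min(1.0, max(0.0, 0.5 + (win_rate - 0.5) * factor))


for p in (0.45, 0.60, 0.80):
    mirror = 1 - p  # the same game seen from the other side
    print(p, "->", round(amplify(p), 3), "| mirror ->", round(amplify(mirror), 3))
```

A 60-40 match-up becomes 70-30, while anything at 75-25 or beyond is clamped to a guaranteed win. Because the stretch is centered on 0.5, the two sides of a match-up still sum to 1 after the transformation.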

What About Other Team Compositions?

The teams used for the initial analysis are the ones our team seriously considered for the competition. As a result, they are significantly more competitively viable than, say, a team of randomly selected classes. To get some insight into this, I created 100 teams of random classes. The only constraint I applied was that a team could have at most 1 of each class (I didn’t include the rule of no more than 2 beta classes). In this case, the turn order unfairness for both methods remained about the same as before: 3.3% for the current alternating format and 0.24% for the new approach. The top and bottom teams in both approaches were roughly the same. The top team in both tournaments was Fighter, Necromancer, Druid, Alchemist, Monk. The bottom team in both tournaments had Bard, Thief, Ranger, Cleric and either Barbarian or Monk. The difference in team score standard deviation disappeared, with both approaches resulting in a standard deviation of 23.1.

The new system maintains more fairness regarding turn order, but doesn’t magically make teams of weaker champions competitively viable.
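Generating the random teams for this experiment takes only a few lines. In the sketch below, `CLASSES` contains only the classes named in this article (the game's full roster may differ), `random_teams` is a hypothetical helper, and the seed is there only to make runs repeatable. As in the experiment, the beta-class limit is not enforced.

```python
import random

# Classes mentioned in this article; treat the list as an assumption.
CLASSES = [
    "Alchemist", "Barbarian", "Bard", "Cleric", "Druid", "Fighter",
    "Monk", "Necromancer", "Ranger", "Thief", "Wizard",
]


def random_teams(count, team_size=5, seed=None):
    """Draw `count` teams with at most one of each class per team."""
    rng = random.Random(seed)
    # sample() picks without replacement, so a team never repeats a class.
    return [rng.sample(CLASSES, team_size) for _ in range(count)]


teams = random_teams(100, seed=1)
print(teams[0])
```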

What if Win Rates are Randomized?

This is an interesting question, because it provides some analysis of the strength of these matchmaking systems independent of the current meta. To measure this, I set up a simulated tournament between 100 random teams. Each team plays every other team, going both first and second. For each pairing, I completely randomized the win rate matrix. Win rates were randomly assigned between 0.2 and 0.8 while maintaining symmetry (the win rate of Class A vs Class B is always 1.0 minus the win rate of Class B vs Class A). Overall, both systems performed more poorly in a random environment. The traditional alternating method resulted in a turn order unfairness of 4.4%, while the 2 bans 3 challengers method resulted in a turn order unfairness of -2.7% (the team going second has a slight advantage). These results seem stable across multiple runs of the simulation, suggesting that the sample size is large enough to produce meaningful results.

--- SYSTEM 1: BAN & CHALLENGE ---

Unfairness Percentage: -2.711%

Metric: (Team X Score when First) - (Team X Score when Second)

| Range | Count |
| --- | --- |
| -0.372 to -0.337 | 7 |
| -0.337 to -0.303 | 22 |
| -0.303 to -0.268 | 57 |
| -0.268 to -0.233 | 127 |
| -0.233 to -0.198 | 242 |
| -0.198 to -0.163 | 424 |
| -0.163 to -0.128 | 582 |
| -0.128 to -0.094 | 776 |
| -0.094 to -0.059 | 790 |
| -0.059 to -0.024 | 705 |
| -0.024 to 0.011 | 541 |
| 0.011 to 0.046 | 268 |
| 0.046 to 0.080 | 200 |
| 0.080 to 0.115 | 95 |
| 0.115 to 0.150 | 51 |
| 0.150 to 0.185 | 25 |
| 0.185 to 0.220 | 21 |
| 0.220 to 0.255 | 9 |
| 0.255 to 0.289 | 2 |
| 0.289 to 0.324 | 6 |

--- SYSTEM 2: ALTERNATING MATCHMAKING ---

Unfairness Percentage: 4.441%

Metric: (Team X Score when First) - (Team X Score when Second)

| Range | Count |
| --- | --- |
| -0.030 to -0.004 | 1 |
| -0.004 to 0.022 | 203 |
| 0.022 to 0.048 | 351 |
| 0.048 to 0.073 | 511 |
| 0.073 to 0.099 | 637 |
| 0.099 to 0.125 | 690 |
| 0.125 to 0.150 | 698 |
| 0.150 to 0.176 | 577 |
| 0.176 to 0.202 | 450 |
| 0.202 to 0.228 | 319 |
| 0.228 to 0.253 | 209 |
| 0.253 to 0.279 | 128 |
| 0.279 to 0.305 | 90 |
| 0.305 to 0.330 | 45 |
| 0.330 to 0.356 | 23 |
| 0.356 to 0.382 | 13 |
| 0.382 to 0.408 | 3 |
| 0.408 to 0.433 | 0 |
| 0.433 to 0.459 | 0 |
| 0.459 to 0.485 | 2 |
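The randomized win rate matrix used in this experiment could be generated along these lines. This is a sketch under assumptions: the article does not state the distribution, so a uniform draw is used, mirror matches are fixed at 0.5, and `random_win_matrix` is a hypothetical name.

```python
import random


def random_win_matrix(classes, low=0.2, high=0.8, seed=None):
    """Random win rates in [low, high] with win[(a, b)] + win[(b, a)] == 1."""
    rng = random.Random(seed)
    win = {}
    for i, a in enumerate(classes):
        win[(a, a)] = 0.5  # mirror match assumed to be even
        for b in classes[i + 1:]:
            p = rng.uniform(low, high)  # uniform draw is an assumption
            win[(a, b)] = p
            win[(b, a)] = 1.0 - p  # keeps the matrix symmetric
    return win


classes = ["Wizard", "Alchemist", "Fighter", "Cleric", "Necromancer", "Thief"]
win = random_win_matrix(classes, seed=7)
print(round(win[("Wizard", "Cleric")] + win[("Cleric", "Wizard")], 6))
```

Because the range 0.2 to 0.8 is centered on 0.5, the mirrored value 1.0 - p stays inside the same range.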

Developing a Matchmaking Tool

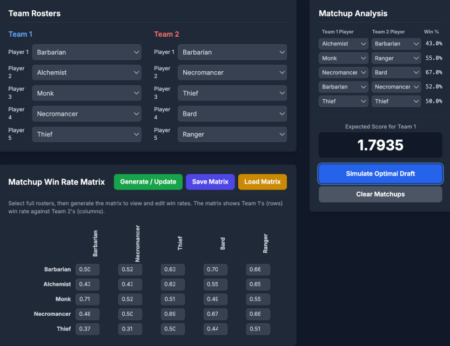

While the proposed method has fewer steps in total, team 1 is making a lot of choices right out of the gate. Being a daredevil is already challenging enough and we want to avoid making weekly matchmaking decisions even more complicated. Our team has been experimenting with a tool that allows you to input a partially filled out matchmaking state and produces the min-max optimized result with an expected value for points scored that round. It has allowed us to compare different scenarios and agree on our strategy for the week. Whether we stay with the current approach or adopt some new one, we should consider adding a similar tool to the Hero Helper website to make it easier for daredevils to make weekly match-up decisions.

Here’s an example of what this could look like for the current alternating approach:

The tool makes investigating the pairing options easier, but the matchmaking step is still a very interesting part of the tournament because:

- Estimating win percentages is actually pretty difficult and requires multiple practice matches to get it right. To do this properly you have to guess your opponents’ builds given their previous tournament performance or recent builds in other tournaments. This is where skill differential makes a big difference.

- There are usually a few decent outcomes that you have to choose between qualitatively (sometimes this isn’t the case and you’re just threading the needle).

- You are still trying to account for team preferences and not sacrificing the same person every week.